I'm out of my depth with technical RDBMS errors. : (_mysql_exceptions.OperationalError) (1205, 'Lock wait timeout exceeded try restarting transaction') (Background on this error at: ) Reraise(type(exception), exception, tb=exc_tb, cause=cause)įile "/usr/local/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1193, in _execute_contextįile "/usr/local/lib/python2.7/site-packages/sqlalchemy/engine/default.py", line 508, in do_executeįile "/usr/local/lib/python2.7/site-packages/MySQLdb/cursors.py", line 250, in executeįile "/usr/local/lib/python2.7/site-packages/MySQLdb/connections.py", line 50, in defaulterrorhandler Return connection._execute_clauseelement(self, multiparams, params)įile "/usr/local/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1060, in _execute_clauseelementįile "/usr/local/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1200, in _execute_contextįile "/usr/local/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 1413, in _handle_dbapi_exceptionįile "/usr/local/lib/python2.7/site-packages/sqlalchemy/util/compat.py", line 203, in raise_from_cause Result = conn.execute(querycontext.statement, self._params)įile "/usr/local/lib/python2.7/site-packages/sqlalchemy/engine/base.py", line 948, in executeįile "/usr/local/lib/python2.7/site-packages/sqlalchemy/sql/elements.py", line 269, in _execute_on_connection Return self._execute_and_instances(context)įile "/usr/local/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2958, in _execute_and_instances filter(or_(*filter_for_tis), TI.state.in_(resettable_states))įile "/usr/local/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2783, in allįile "/usr/local/lib/python2.7/site-packages/sqlalchemy/orm/query.py", line 2935, in _iter_ Self.reset_state_for_orphaned_tasks(session=session)įile "/usr/local/lib/python2.7/site-packages/airflow/utils/db.py", line 50, in wrapperįile "/usr/local/lib/python2.7/site-packages/airflow/jobs.py", line 266, in 
reset_state_for_orphaned_tasks Here's the traceback from Stackdriver: Traceback (most recent call last):įile "/usr/local/bin/airflow", line 27, in įile "/usr/local/lib/python2.7/site-packages/airflow/bin/cli.py", line 826, in schedulerįile "/usr/local/lib/python2.7/site-packages/airflow/jobs.py", line 198, in runįile "/usr/local/lib/python2.7/site-packages/airflow/jobs.py", line 1549, in _executeįile "/usr/local/lib/python2.7/site-packages/airflow/jobs.py", line 1594, in _execute_helper The error is very similar to what I've also had before. This time, there is a CrashLoopBackOff and the scheduler pod cannot restart anymore. The scheduler container crashes sometimes, and it can get into a locked situation in which it cannot start any new tasks (an error with the database connection) so I have to re-create the whole Composer environment. You need to enable persistence volumes: Enable persistent volumes enabled: true Volume size for worker StatefulSet size: 10Gi If using a custom storageClass, pass name ref to all statefulSets here storageClassName: Execute init container to chown log directory.I've been using Google Composer for a while ( composer-0.5.2-airflow-1.9.0), and had some problems with the Airflow scheduler. Test: Īfter that check that fixPermissions is true. Please let me know if additional info is needed.īelow is my docker-compose.yml for the scheduler/webserver/flower: version: '3.4' It is either read-protected or not readable by the server. You don't have the permission to access the requested resource. I've also tried to copy the URL in the error, and change replace the IP with the host machine ip, and got this message: Forbidden Connection refusedīut sometimes still give "Temporary failure in name resolution" error.

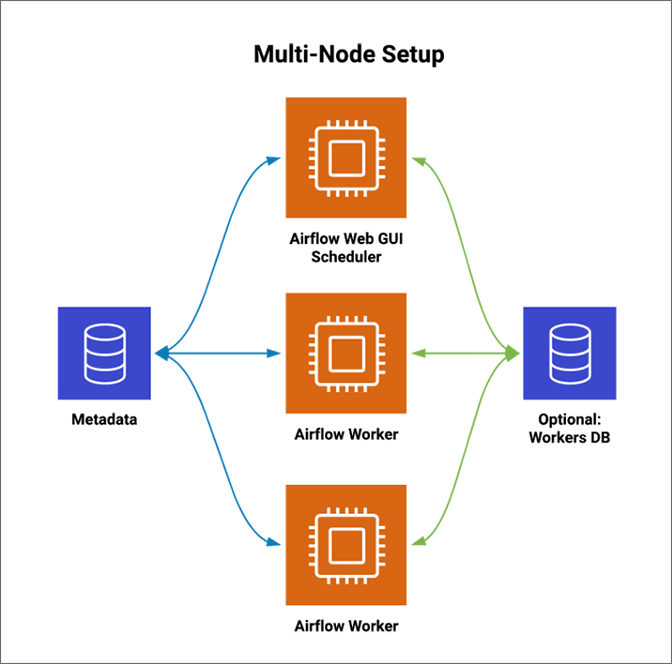

Then I tried map the port 8793 of the worker with its host machine (in worker_4 below), now it's returning: *** Failed to fetch log file from worker. *** Failed to fetch log file from worker. Then I tried replaced AIRFLOW_CORE_HOSTNAME_CALLABLE: 'socket.getfqdn' with AIRFLOW_CORE_HOSTNAME_CALLABLE: '_host_ip_address' *** Fetching from: *** Failed to fetch log file from worker. I was able to get the workers up and running, flower is able to find the worker node, the worker is receiving tasks from the scheduler correctly, but regardless of the result status of the task, the task would be marked as failed with error message like below: *** Log file does not exist: /opt/airflow/logs/test/test/T14:38:37.669734+00:00/1.log Now I want to expand, and set up workers on other machines. I just recently installed airflow 2.1.4 with docker containers, I've successfully set up the postgres, redis, scheduler, 2x local workers, and flower on the same machine with docker-compose.
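The MySQL message in the Composer traceback ("Lock wait timeout exceeded; try restarting transaction") suggests retrying the failed transaction. The snippet below is only a minimal sketch of that idea for your own database code, not a fix for the Airflow scheduler itself. The names `is_lock_wait_timeout` and `run_with_retry` are hypothetical, and it assumes the raised exception either carries the driver error code as `args[0]` or exposes the driver error as `.orig`, as SQLAlchemy's wrapped DB-API errors do.

```python
import time

LOCK_WAIT_TIMEOUT = 1205  # MySQL error code shown in the traceback


def is_lock_wait_timeout(exc):
    """Return True if exc looks like a MySQL lock wait timeout (1205)."""
    # Assumption: a wrapped error exposes the driver exception as `.orig`;
    # otherwise treat exc itself as the driver exception.
    driver_exc = getattr(exc, "orig", None) or exc
    args = getattr(driver_exc, "args", ())
    return bool(args) and args[0] == LOCK_WAIT_TIMEOUT


def run_with_retry(transaction, attempts=3, delay=1.0):
    """Call transaction(); retry only on lock wait timeouts.

    `transaction` is a hypothetical callable that runs one complete
    unit of work (begin, statements, commit or rollback).
    """
    for attempt in range(1, attempts + 1):
        try:
            return transaction()
        except Exception as exc:
            # Re-raise anything that is not a lock timeout, and give up
            # after the last attempt.
            if not is_lock_wait_timeout(exc) or attempt == attempts:
                raise
            # Linear back-off before restarting the transaction.
            time.sleep(delay * attempt)
```

Retrying only hides the symptom. If the timeout keeps coming back, some other session is probably holding the lock for a long time, and that is worth investigating on the database side.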
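The "Failed to fetch log file from worker" errors in the docker question alternate between "Connection refused" and "Temporary failure in name resolution", which are two different failures (port not reachable vs. hostname not resolvable). As a rough diagnostic sketch, the script below can be run from inside the webserver container to tell the two apart. The function name `check_worker_log_endpoint` is hypothetical, and it assumes the worker serves logs on port 8793 as described in the question.

```python
import socket

WORKER_LOG_PORT = 8793  # log-serving port mentioned in the question


def check_worker_log_endpoint(host, port=WORKER_LOG_PORT, timeout=3.0):
    """Report whether host:port resolves and accepts a TCP connection."""
    try:
        # Step 1: can this container resolve the worker's hostname at all?
        addr_info = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    except socket.gaierror:
        return "name resolution failed"

    family, socktype, proto, _, sockaddr = addr_info[0]
    try:
        # Step 2: is anything listening on the resolved address and port?
        with socket.socket(family, socktype, proto) as sock:
            sock.settimeout(timeout)
            sock.connect(sockaddr)
    except ConnectionRefusedError:
        return "connection refused"
    except socket.timeout:
        return "timed out"
    except OSError as exc:
        return "error: {}".format(exc)
    return "reachable"


if __name__ == "__main__":
    import sys
    # Usage: python check_worker.py <worker-hostname-or-ip>
    print(check_worker_log_endpoint(sys.argv[1]))
```

A "name resolution failed" result points at the hostname the worker reports to the scheduler, while "connection refused" points at port publishing or firewalling between the machines. A "reachable" result combined with a Forbidden page means the TCP path works and the rejection happens at the HTTP level.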
